
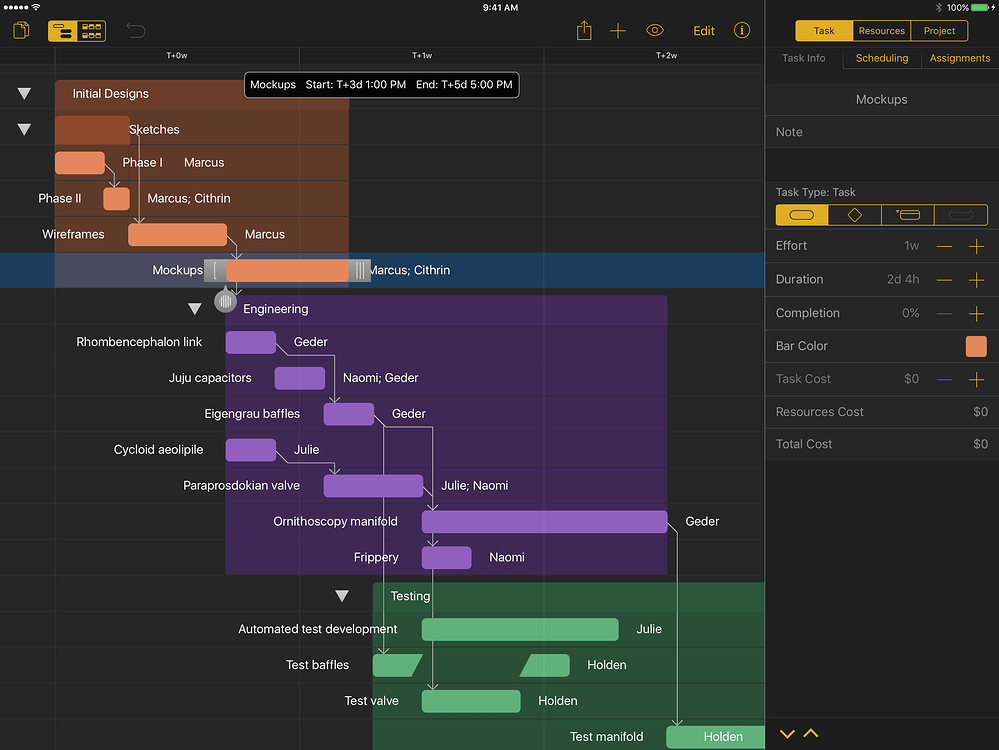

1/24/2024

Omniplan 1.5

Data in custom columns now exports to Microsoft Project. We've also built in an automatic sync mechanism. Work out a plan even faster with hardware keyboard shortcuts on iPad. A free plan is also available upon registration.

StyleGAN-V: A Continuous Video Generator with the Price, Image Quality and Perks of StyleGAN2

Ivan Skorokhodov, Sergey Tulyakov, Mohamed Elhoseiny

We build a non-autoregressive video generator which is continuous in time. It is based on StyleGAN2, and we rethink fundamental components of video synthesis models. First, we redesign the motion codes to be continuous by structuring them as acyclic positional embeddings. Then, we drop the usage of expensive Conv3d layers and aggregate the temporal information across frames by simple concatenation. Finally, we demonstrate that a state-of-the-art video generator can be trained with a very sparse sampling scheme, using just 2-3 frames per clip. Our modifications greatly improve the training efficiency of our model, and we achieve strong state-of-the-art results on FaceForensics \(256^2\), Sky Timelapse \(256^2\), UCF-101 \(256^2\), Rainbow Jelly \(256^2\) and MEAD \(1024^2\). We also demonstrate the video manipulation properties of our generator, like projecting a video into its latent space using just a single frame and CLIP-based editing.

Ivan Skorokhodov, Sergey Tulyakov, Yiqun Wang, Peter Wonka

In the past several months, more than 10 works have appeared that speed up NeRF-based GANs by training a separate 2D decoder to upsample a low-resolution 3D representation produced by the NeRF generator. This solution comes at a cost: it breaks multi-view consistency and learns the geometry at a low resolution. Instead, we show that it is possible to obtain a high-resolution 3D generator with SotA image quality by simply training the model patch-wise. We revisit and improve this optimization scheme in two ways: 1) by designing a location- and scale-aware discriminator to work on patches of different proportions and spatial positions, and 2) by modifying the patch sampling strategy based on an annealed beta distribution to stabilize training and accelerate convergence. The resulting model, named EpiGRAF, is an efficient, high-resolution, pure 3D generator, and we test it on four datasets (two introduced in this work) at \(256^2\) and \(512^2\) resolutions. It obtains state-of-the-art image quality and high-fidelity geometry, and trains \(2.5\times\) faster than the upsampler-based counterparts.

Ivan Skorokhodov, Aliaksandr Siarohin, Yinghao Xu, Jian Ren, Hsin-Ying Lee, Peter Wonka, Sergey Tulyakov

Existing 3D-from-2D generators are typically designed for well-curated single-category datasets, where all the objects have (approximately) the same scale, 3D location and orientation. This makes them inapplicable to diverse, in-the-wild datasets of non-alignable scenes rendered from arbitrary camera poses. In this work, we develop a 3D generator with Generic Priors (3DGP): a 3D synthesis framework with more general assumptions about the training data, and show that it scales to very challenging datasets, like ImageNet. Our model is based on three new ideas: 1) using an off-the-shelf depth estimator to guide the learning of 3D geometry, 2) a flexible learnable camera generator and a regularization strategy for it, and 3) knowledge distillation into the discriminator to transfer the external knowledge from a pre-trained feature extractor. We explore our model on four datasets and demonstrate that 3DGP outperforms the recent state-of-the-art in terms of both texture and geometry quality.
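The patch sampling strategy mentioned in the EpiGRAF abstract (patch scales drawn from an annealed beta distribution) can be pictured with a small sketch. This is only an illustration under stated assumptions, not the authors' implementation: the function names, the linear schedule, the values `beta_start`, `beta_end` and `min_scale`, and even the direction of the annealing are all hypothetical, since the abstract does not specify them.

```python
import random


def annealed_beta(step, total_steps, beta_start=1.0, beta_end=0.5):
    """Linearly interpolate the second Beta parameter over training.

    The schedule shape and both endpoint values are assumptions made
    for illustration; the abstract only says the distribution is annealed.
    """
    t = min(max(step / total_steps, 0.0), 1.0)
    return beta_start + t * (beta_end - beta_start)


def sample_patch(step, total_steps, min_scale=0.125, rng=random):
    """Sample a square patch as (scale, x_offset, y_offset).

    All values are in normalized [0, 1] image coordinates, and the
    patch is guaranteed to lie inside the image.
    """
    beta = annealed_beta(step, total_steps)
    # Draw a value in [0, 1] from Beta(1, beta), then map it to [min_scale, 1].
    scale = min_scale + (1.0 - min_scale) * rng.betavariate(1.0, beta)
    # Place the patch uniformly among the positions where it still fits.
    x_offset = rng.uniform(0.0, 1.0 - scale)
    y_offset = rng.uniform(0.0, 1.0 - scale)
    return scale, x_offset, y_offset


if __name__ == "__main__":
    for step in (0, 5000, 10000):
        print(step, sample_patch(step, total_steps=10000))
```

With these hypothetical settings the scale is uniform over its range at step 0 (Beta(1, 1) is the uniform distribution) and shifts toward larger patches as the second parameter drops below 1. A scale- and location-aware discriminator, as described in the abstract, would additionally receive `scale` and the offsets as conditioning inputs.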
